Robots.txt Generator by Alaikas

In the world of websites and search engines, visibility is everything. No matter how well-designed or informative your website is, it will not perform effectively if search engines cannot properly access and understand it. This is where technical SEO plays a critical role, and one of its most important components is the robots.txt file.

Managing a robots.txt file manually can be confusing, especially for beginners. A small mistake in the file can block important pages from being crawled or leave areas you meant to exclude open to crawlers. To simplify this process, tools like the robots.txt generator by Alaikas are designed to help users create accurate and effective robots.txt files without technical complexity.

What is a Robots.txt File?

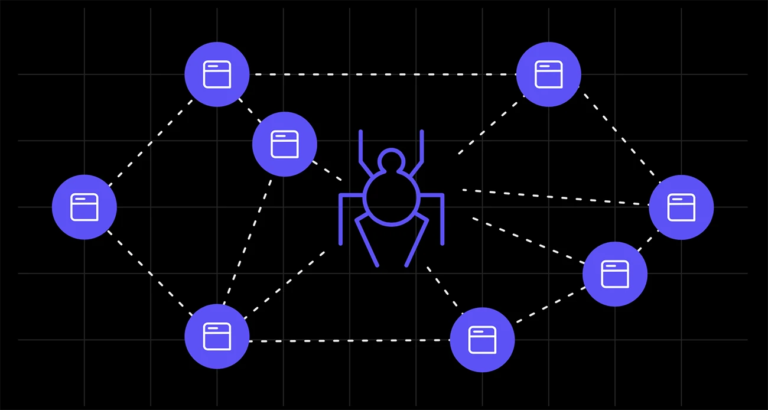

Before understanding the tool, it is important to know what a robots.txt file is. A robots.txt file is a simple text file placed in the root directory of a website. It provides instructions to search engine crawlers (also known as bots or spiders) about which pages or sections of the site they are allowed or not allowed to access.

For example, you may want search engines to index your blog posts but not your admin pages or private directories. The robots.txt file helps control this behavior. This file acts as a communication bridge between your website and search engines, guiding them on how to crawl your content efficiently.
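To make the idea concrete, here is a minimal sketch using Python's standard `urllib.robotparser` module. The rules, the `example.com` URLs, and the directory names are illustrative assumptions, not output from the Alaikas tool. It shows a file that leaves blog posts open while keeping crawlers out of the admin and private areas.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: keep crawlers out of /admin/ and /private/,
# and leave everything else (including the blog) open.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /private/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Ask the parser how a crawler that obeys the file would treat two URLs.
blog_allowed = parser.can_fetch("*", "https://example.com/blog/first-post")
admin_allowed = parser.can_fetch("*", "https://example.com/admin/settings")

print("blog post crawlable:", blog_allowed)
print("admin page crawlable:", admin_allowed)
```

Keep in mind that these rules are only requests. Well-behaved crawlers follow them, but the file itself does not stop anyone from opening a listed URL.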

What is Robots.txt Generator by Alaikas?

The robots.txt generator by Alaikas is an online tool that helps users create a properly structured robots.txt file quickly and easily. Instead of writing the file manually, users can use this tool to generate the correct format based on their needs.

The tool simplifies a technical process by offering a user-friendly interface where users can define rules for search engine crawlers. It ensures that the generated file follows standard guidelines and reduces the risk of errors. This makes it especially useful for beginners, small business owners, bloggers, and even experienced developers who want a quick and reliable solution.

Why is Robots.txt Important for SEO?

The robots.txt file plays a significant role in search engine optimization (SEO). First, it helps search engines crawl your website more efficiently: by steering bots toward important pages and away from unnecessary ones, it keeps crawl activity focused where it matters. Second, it keeps crawlers out of sections that are sensitive or irrelevant to search. Pages like admin panels, login areas, or duplicate content sections can be excluded from crawling using robots.txt.

Third, it discourages crawling of low-value pages, so search engines spend their time on your most important content. Note that robots.txt controls crawling rather than indexing: a blocked URL can still appear in search results if other pages link to it. Using a tool like the robots.txt generator by Alaikas helps you achieve these benefits with fewer technical errors.

Key Features of Robots.txt Generator by Alaikas

The tool offers several features that make it practical and effective.

User-Friendly Interface

The tool is designed to be simple and easy to use, even for beginners.

Automatic File Generation

It creates a correctly formatted robots.txt file based on user input.

Error Reduction

By automating the process, the tool minimizes the risk of mistakes.

Customizable Rules

Users can define which pages or directories should be allowed or disallowed.

Fast and Efficient

The file is generated instantly, saving time and effort.

How the Robots.txt Generator Works

The working process of the robots.txt generator by Alaikas is straightforward. First, users access the tool and select the type of rules they want to apply. This may include allowing or disallowing specific pages, directories, or user agents. Next, the tool processes the input and generates a robots.txt file in the correct format.

Finally, users can copy the generated file and upload it to the root directory of their website. This simple process eliminates the need for manual coding and ensures accuracy.
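The general idea behind any such generator can be sketched in a few lines of Python. This is not the Alaikas implementation, which is not documented here. The function name `build_robots_txt`, its parameters, and the sample paths are hypothetical and only illustrate turning structured user input into correctly formatted text.

```python
def build_robots_txt(rules, sitemap_url=None):
    """Render robots.txt text from {user_agent: [(directive, path), ...]}.

    Hypothetical helper for illustration only.
    """
    lines = []
    for user_agent, directives in rules.items():
        lines.append(f"User-agent: {user_agent}")
        for directive, path in directives:
            # Reject anything other than the two rule directives.
            if directive not in ("Allow", "Disallow"):
                raise ValueError(f"unsupported directive: {directive}")
            lines.append(f"{directive}: {path}")
        lines.append("")  # a blank line separates one group from the next
    if sitemap_url:
        lines.append(f"Sitemap: {sitemap_url}")
    # Normalize to exactly one trailing newline.
    return "\n".join(lines).rstrip("\n") + "\n"


generated = build_robots_txt(
    {"*": [("Disallow", "/admin/"), ("Disallow", "/login/")]},
    sitemap_url="https://example.com/sitemap.xml",
)
print(generated)
```

The resulting text would be saved as `robots.txt` and uploaded to the site's root directory, as described above.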

Common Rules in Robots.txt File

Understanding common rules can help users use the tool more effectively.

User-agent

This specifies which search engine crawler the rules that follow apply to, for example Googlebot, or * for all bots.

Disallow

This directive tells crawlers not to access certain pages or directories.

Allow

This allows specific pages to be accessed, even within restricted directories.

Sitemap

This provides the location of the website’s XML sitemap, helping search engines find important pages.

The robots.txt generator by Alaikas helps users implement these rules without needing deep technical knowledge.
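The four directives above can be seen working together in the following sketch, again using Python's standard `urllib.robotparser`. The bot name `OtherBot`, the paths, and the sitemap URL are made-up examples. One caveat: Python's parser applies the first matching rule in a group, whereas major search engines pick the most specific (longest) match, so the `Allow` line is placed before the broader `Disallow` here to get the same result under both behaviors.

```python
from urllib.robotparser import RobotFileParser

# Illustrative file with two groups and a sitemap reference.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /drafts/

User-agent: *
Allow: /private/press-kit.html
Disallow: /private/

Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

results = {
    # The Googlebot group blocks /drafts/ for Googlebot only.
    "googlebot_drafts": parser.can_fetch("Googlebot", "https://example.com/drafts/post"),
    "other_drafts": parser.can_fetch("OtherBot", "https://example.com/drafts/post"),
    # Allow carves one page out of an otherwise disallowed directory.
    "press_kit": parser.can_fetch("OtherBot", "https://example.com/private/press-kit.html"),
    "private_notes": parser.can_fetch("OtherBot", "https://example.com/private/notes.html"),
}
sitemaps = parser.site_maps()  # available in Python 3.8 and later

for name, allowed in results.items():
    print(name, allowed)
print(sitemaps)
```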

Benefits of Using Robots.txt Generator by Alaikas

Using this tool provides several advantages.

Saves Time

Manual creation of robots.txt files can be time-consuming. The tool speeds up the process.

Reduces Errors

Automated generation ensures correct syntax and structure.

Beginner-Friendly

Even users with no technical background can create a robots.txt file.

Improves SEO Performance

Proper crawling and indexing improve search engine visibility.

Enhances Website Security

Restricting sensitive areas keeps well-behaved crawlers away from them, although it does not replace proper access controls such as authentication.

Best Practices for Using Robots.txt

To get the best results, users should follow certain best practices. Avoid blocking important pages that should be indexed. Always double-check the rules before uploading the file. Use the robots.txt file to manage crawl behavior, not to hide sensitive data completely. Sensitive information should be protected using proper security measures.

Regularly update the file as your website grows and changes. This ensures that search engines always have the correct instructions. Test the file using search engine tools to ensure it works as expected.
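Alongside the testing tools that search engines provide, a draft file can be checked locally before upload. The sketch below is one possible approach, assuming Python's standard `urllib.robotparser`. The helper name `check_rules` and the sample paths are hypothetical. It compares a draft against lists of paths that must stay crawlable and paths that must be blocked.

```python
from urllib.robotparser import RobotFileParser


def check_rules(robots_txt, must_allow, must_block, user_agent="*"):
    """Return a list of problems; an empty list means every check passed."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    problems = []
    for path in must_allow:
        if not parser.can_fetch(user_agent, path):
            problems.append(f"blocked but should be crawlable: {path}")
    for path in must_block:
        if parser.can_fetch(user_agent, path):
            problems.append(f"crawlable but should be blocked: {path}")
    return problems


# A draft that accidentally blocks the blog.
draft = "User-agent: *\nDisallow: /admin/\nDisallow: /blog/\n"
problems = check_rules(
    draft,
    must_allow=["/", "/blog/hello"],
    must_block=["/admin/"],
)
print(problems)
```

A check like this only reflects how Python's parser reads the file. Individual search engines may interpret edge cases differently, so their own testing tools remain the final word.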

Common Mistakes to Avoid

While using robots.txt, users should avoid common mistakes. Blocking the entire website accidentally is one of the most serious errors. This can prevent search engines from indexing any pages. Incorrect syntax can also cause issues. Even small formatting errors can lead to unexpected results.

Relying solely on robots.txt for security is another mistake. It does not prevent direct access to restricted pages. The robots.txt generator by Alaikas helps reduce these risks by providing correct formatting and guidance.
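Two of the mistakes above, a site-wide block and a misspelled directive, are easy to catch mechanically. The following is a rough sketch of such a check, not a full validator. The function name `lint_robots_txt` and the list of recognized directives are assumptions made for this example (`Crawl-delay` is included because it is commonly seen, though not every search engine honors it).

```python
# Directives this sketch recognizes; an assumption, not an official list.
KNOWN_DIRECTIVES = {"user-agent", "allow", "disallow", "sitemap", "crawl-delay"}


def lint_robots_txt(text):
    """Return warnings for unknown directives and site-wide blocks."""
    warnings = []
    current_agents = []
    in_rules = False  # True once the current group has an Allow/Disallow line
    for number, raw in enumerate(text.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        if ":" not in line:
            warnings.append(f"line {number}: missing ':' separator")
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        field = field.lower()
        if field not in KNOWN_DIRECTIVES:
            warnings.append(f"line {number}: unknown directive '{field}'")
        elif field == "user-agent":
            if in_rules:  # a User-agent line after rules starts a new group
                current_agents = []
                in_rules = False
            current_agents.append(value)
        elif field in ("allow", "disallow"):
            in_rules = True
            if field == "disallow" and value == "/" and "*" in current_agents:
                warnings.append(
                    f"line {number}: blocks the entire site for all crawlers"
                )
    return warnings


risky = "User-agent: *\nDisalow: /tmp/\nDisallow: /\n"
warnings = lint_robots_txt(risky)
for warning in warnings:
    print(warning)
```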

Who Should Use Robots.txt Generator by Alaikas?

This tool is useful for a wide range of users.

Website Owners can manage how search engines interact with their site.

Bloggers can control which content is crawled and indexed.

SEO Professionals can optimize crawl efficiency and improve rankings.

Developers can quickly generate files without manual coding.

Beginners can create robots.txt files without technical knowledge.

Real-World Use Cases

The robots.txt generator by Alaikas can be used in many practical scenarios.

A business website may use it to block admin pages and private sections.

An e-commerce site may restrict duplicate product pages to improve SEO.

A blog may allow search engines to focus only on important posts and categories.

In each case, the tool helps users manage their website more effectively.

Future of Robots.txt Tools

The future of tools like the robots.txt generator by Alaikas is promising. As technology evolves, these tools may include AI-based suggestions for better optimization.

They may also integrate with other SEO tools to provide a complete website management solution. This will make it easier for users to optimize their sites without needing advanced technical knowledge. Alaikas is likely to continue improving its tools to meet the growing needs of users in the digital space.

Conclusion

The robots.txt generator by Alaikas is a valuable tool for anyone managing a website. It simplifies the process of creating robots.txt files, reduces errors, and improves overall website performance.

By guiding search engines on how to crawl and index content, it plays a crucial role in SEO and website management. Whether you are a beginner or an experienced professional, this tool provides a simple and effective way to handle an important technical task.